Overview

What this project was about

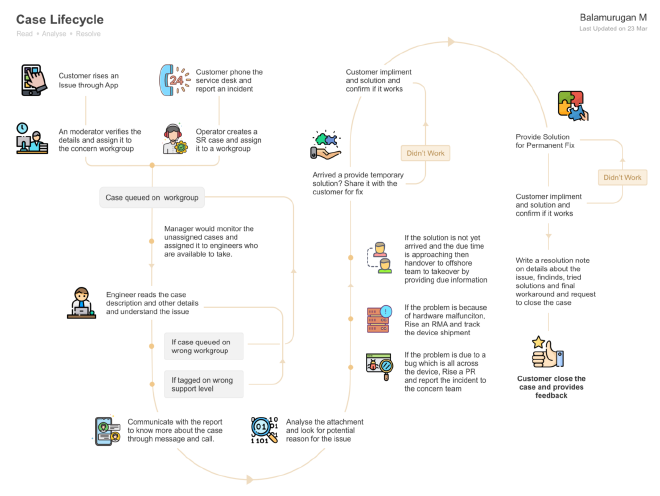

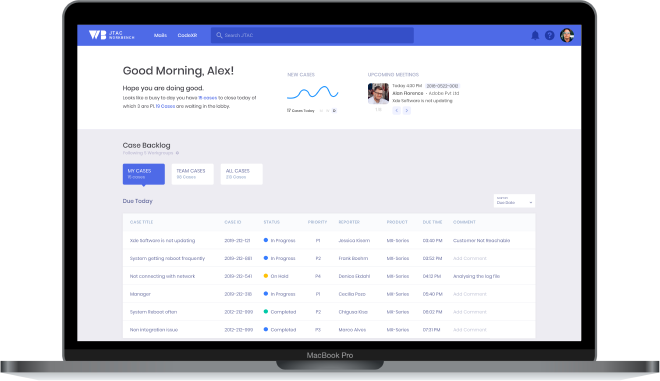

The technical support vertical generated over 60% of Juniper Networks' revenue, yet engineers were spending an average of more than 3 hours resolving a single case. The organisation wanted to introduce automation and streamline workflows to bring that number down significantly. I led the research and design work to understand how support engineers actually worked and what a better workbench would look like for them.

The Challenge

Starting With the Business Reality

The support vertical was Juniper's most revenue-critical function, but engineers were working in a fragmented toolset that forced them to context-switch constantly. Cases, notes, attachments, communication threads, and file viewers all lived in separate systems. The mandate was clear: reduce case turnaround time. The path to get there required understanding exactly where that time was going.

Research & Discovery

Initial desk research covered wikis, support documentation, HR hiring documents, and networking industry forums, building context before we went into the field. Primary research combined in-person workplace observations of support engineers with formal interviews conducted by the research team.

User Persona

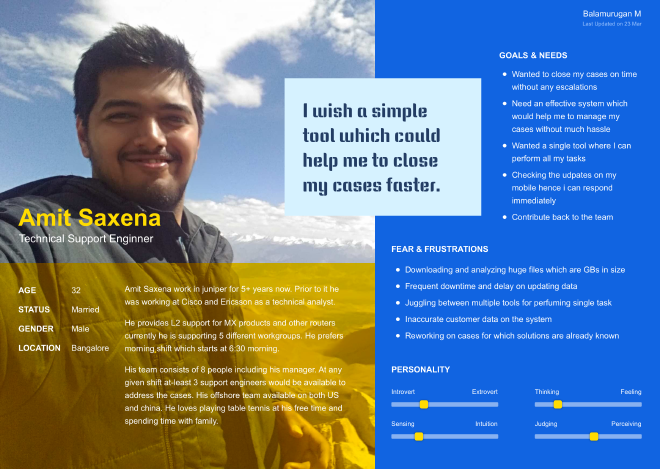

To keep the team anchored on real users rather than assumptions, we created Amit Saxena — a detailed persona grounded in research observations — and used him as the lens for every design decision.

Design Approach: Storyboarding to IA to Wireframes

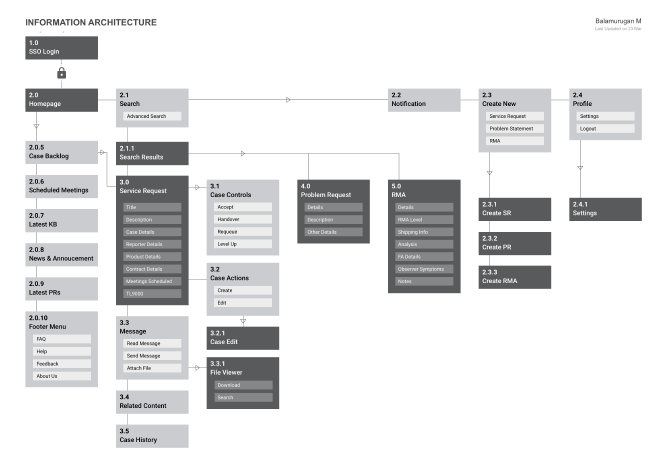

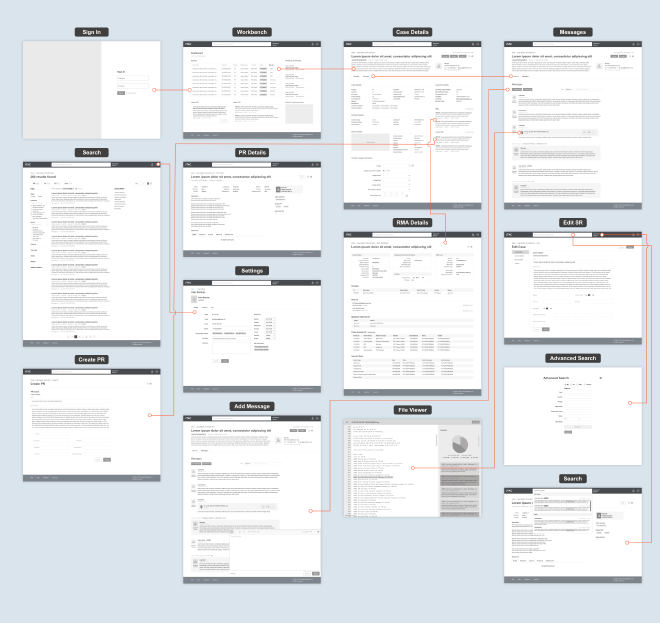

We used storyboarding to visualise real-life scenarios — helping the broader team see how the workbench would change the day of an engineer like Amit. Information Architecture activities explored different navigation structures before committing to a direction.

High-Fidelity Design Solutions

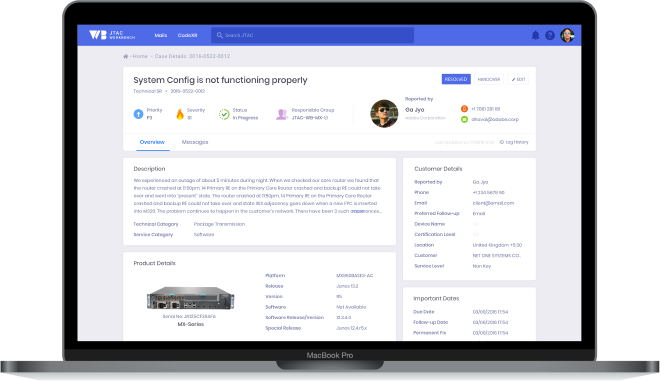

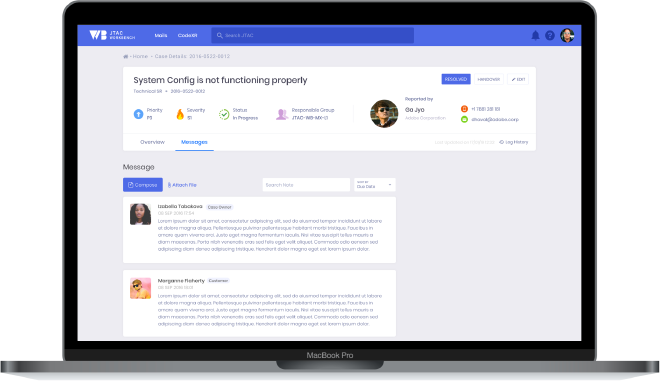

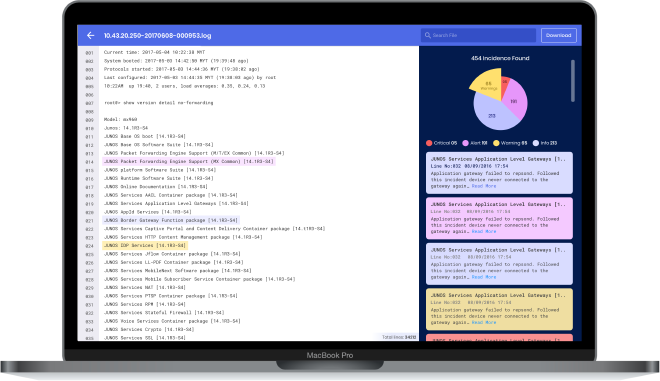

The workbench was designed around four core experiences that addressed the biggest time sinks engineers faced daily.

Results

Outcomes & Impact

Reflection

What I learned

This project reinforced that internal tools deserve the same research rigour as customer-facing products. Field research with support engineers revealed how much context they carry in their heads, context that should be externalised into the interface. Storyboarding proved invaluable for making the abstract concrete; suddenly the whole team could see how the workbench would change an engineer's day. In future projects I'd introduce personas and scenarios earlier, because they ground decisions when there's pressure to move fast.

Interested in working together?

I'm available for freelance design work, consulting, and speaking opportunities.
